I had a different piece planned for this issue. State bar AI disclosure rules — the kind of compliance-adjacent analysis that’s always useful for a legal audience and that, in any other month, would have been the natural fit. It was researched, half-drafted, and almost ready to finish. I shelved it.

Between April 4 and April 27, four storylines played out across legal AI in the United States, the United Kingdom, and continental Europe. Each was covered separately by trade press. Artificial Lawyer had its beat. Law.com had its. Above the Law had its angle. Bloomberg Law and Reuters had their respective desks. None of them stitched the four stories together, and structurally they wouldn’t have, because each story belongs to a different reporter on a different desk on a different day. That’s how legal news has always worked.

But sitting on top of all four and reading them in sequence is a fundamentally different experience from reading any one of them in isolation. It’s not four pieces of news. It’s one story with four chapters. And the through-line, the thing that ties them together once you stop treating them as discrete events, is that legal AI changed phase in April.

I’m aware that “phase change” is the kind of language that gets thrown around in this space without much weight behind it. I’m using it deliberately. By phase change, I mean something specific: in thirty days, the legal market crossed thresholds in adoption, risk, coordination, and execution simultaneously, and crossed them in a way that makes the prior thirty months of careful pilot programs and committee reviews look like a different era of the conversation. This is my read of what April 2026 actually was.

Not as a recap. As a market signal. Ten years from now, when someone asks when legal AI stopped feeling theoretical, April 2026 is going to be one of the cleanest answers we have.

This is the first edition of a new monthly format. At the end of every month from now on, I’ll step back from the daily noise and pull together the legal AI stories that actually matter — not every product launch, not every vendor announcement, not every think piece, just the moves that, when read together, change how law firms should think about strategy, risk, operations, and client acquisition. I know most lawyers and firm leaders are busy. The point of this format is that you should not have to follow forty legal tech stories a month to understand what is happening to your market. You should be able to read one piece and walk away with the map.

Now to April.

The Frame

In thirty days, the legal industry told us four different things at the same time. Each storyline alone was a notable news event. Read together, they form the four stages of a single disruption. Let me name them before I walk through them in detail.

The first storyline is infrastructure committed. A Magic Circle firm and an Am Law top-30 firm publicly declared that AI is no longer an experiment. It’s infrastructure. Two firms, two different vendor strategies, and one operating thesis: this is not a tool we use, this is a layer we run on. The conversation about whether to adopt AI ended at the top of the market. The conversation about how fast and how deep replaced it.

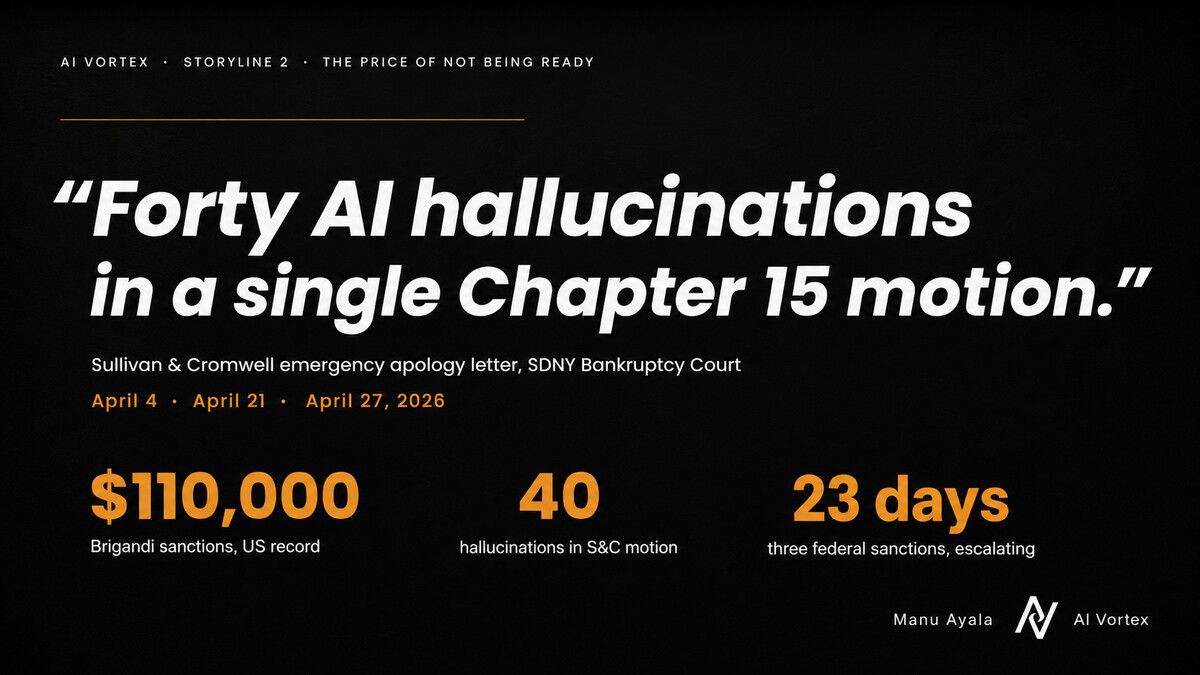

The second storyline is the price of not being ready. Three federal sanctions stories landed in 23 days, escalating in dollar amount, visibility, and reputational cost. The biggest involved Sullivan & Cromwell — Am Law top 10, Magic Circle peer, the most discreet firm in legal — which filed an emergency apology letter to a federal bankruptcy judge after approximately forty AI hallucinations were found in a single Chapter 15 motion. The “associates and small firms are the reckless ones with AI” framing died in April. It cannot survive what happened at S&C.

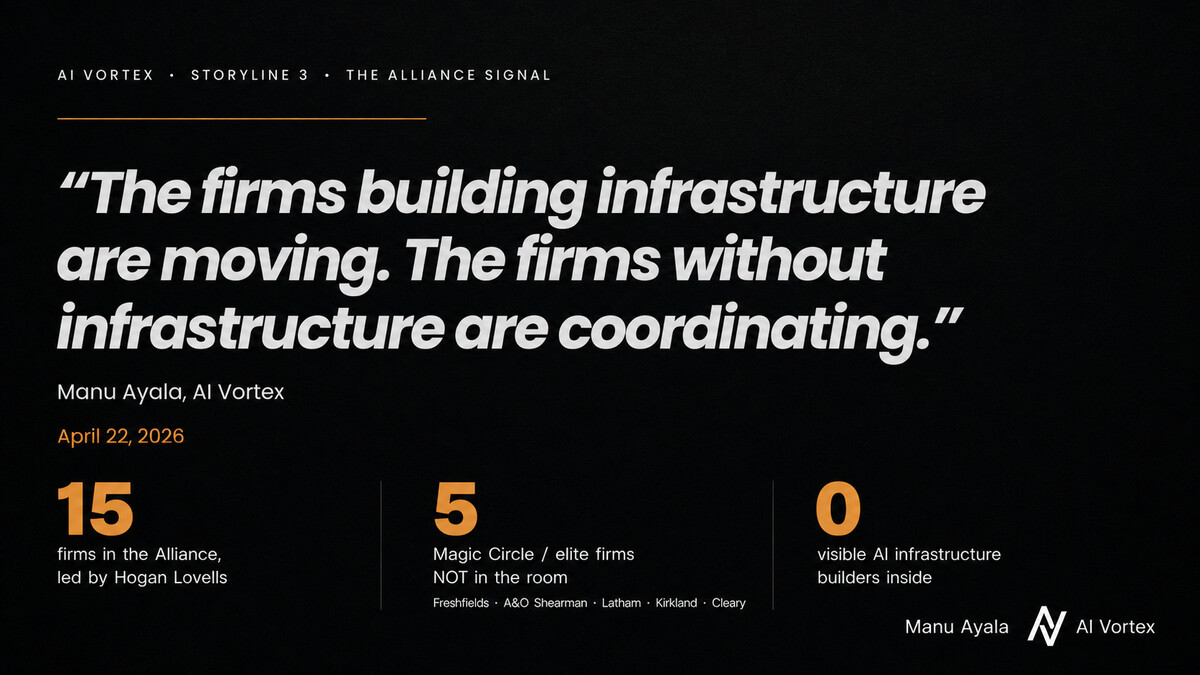

The third storyline is the alliance signal. Hogan Lovells joined the Global Legal Tech Alliance, a network of more than fifteen firms formed to “shape the future of AI-enabled legal services.” The announcement got polite coverage. What it didn’t get was the obvious second read. The firms most actively building, productizing, or operating AI infrastructure (Freshfields, A&O Shearman, Latham, Kirkland, Cleary) were not in the room. That absence is the more interesting story.

The fourth storyline is agentic shipped. Clio pushed Vincent further into agentic territory, explicitly framing it as end-to-end legal execution rather than assistance. Harvey published GPT-5.5 evaluations at 91.7% on its BigLaw Bench and quietly broadened its model stack to include Google and Anthropic alongside OpenAI. The language shifted from assistance to execution. That word change is more important than most observers acknowledged at the time.

Each of those four stories is news on its own. Stitched together, they describe four stages of the same disruption playing out simultaneously: firms committing to infrastructure, firms paying for not having it, firms coordinating around the gap, and the technology that makes infrastructure mandatory rather than optional shipping in real time. The firms that don’t see them as connected are the firms most exposed to what’s actually happening underneath.

Let me walk you through them in order, with the detail each one deserves.

Storyline 1: Infrastructure Committed

On April 15, Freshfields published the year-one update of its strategic partnership with Google Cloud. The update was framed as a milestone announcement — a year into a multi-year deal originally signed in April 2025 — but the actual content of the announcement was a phase change disguised as a press release.

The headline quote, given by Lukas Treichl, Co-Head of Freshfields Lab, was unambiguous:

“A year into our Google Cloud partnership, Gemini is no longer an experiment at Freshfields, it is infrastructure.”

That sentence sounds like marketing at first read. It isn’t. Lawyers and law firm leaders have been calling AI “experimental” for almost three years now. Treichl drew a line in public. What Freshfields uses Gemini for is no longer a pilot, no longer an experiment, no longer an exploration. It is infrastructure — meaning the firm depends on it, governs it, and treats it as a permanent part of how legal work happens at the firm.

The numbers underneath the headline back the framing. More than 5,000 of Freshfields’ approximately 5,700 firmwide professionals are now using AI tools built on Gemini. There are 2,800 Google Workspace seats live. NotebookLM Enterprise has 2,100 daily users at the firm. There are 260 internal AI Champions distributing governance responsibilities across the firm’s 33 offices. And the most important detail, the one buried under the more headline-friendly user counts, is the existence of Freshfields Lab — a custom internal application layer, built in-house, that sits above the foundation models and defines how Freshfields’ lawyers actually use AI in their work.

That last detail is the strategic move. The vendor underneath is interchangeable. The application layer is not.

Eight days later, on April 23, Freshfields announced a parallel multi-year deal with Anthropic. Claude was deployed wall-to-wall across all 33 offices. Anthropic’s leadership reported that adoption of Claude inside Freshfields rose roughly 500% in the first six weeks of internal deployment — which means the operational integration had been running quietly for about a month and a half before the public announcement.

Trade press treated the two announcements as competing vendor wins. Google scored Freshfields in mid-April. Then Anthropic scored Freshfields. Two stories, two press releases, two vendor narratives. That framing is wrong, and the reason it’s wrong matters.

Freshfields did not pick Google. Freshfields did not pick Anthropic. Freshfields picked itself.

The thesis I’ve been writing about for several months now is simple: in the legal AI stack, the foundation model layer is commoditizing, and the application layer is where moats get built. April 15 and April 23 gave that thesis Magic Circle proof. Freshfields is acting as if the strategic question is not which foundation model wins. They are acting as if the strategic question is who controls the layer where legal work actually happens. Google can sit underneath that layer today. Anthropic can sit underneath it today. Whichever foundation model wins next year’s eval can sit underneath it then. The point is not model loyalty. The point is architectural control.

That distinction is the difference between a vendor strategy and an infrastructure strategy. A vendor strategy locks a firm into pricing power it does not control. An infrastructure strategy treats the vendor as a layer that can be swapped. One is procurement. The other is architecture. Vendor strategies are useful as intermediate steps. They are not durable as endgames, because they assume the foundation model market will stop moving and the firm’s bargaining position will stay intact across renewal cycles. Neither assumption holds.
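To make the procurement-versus-architecture distinction concrete, here is a minimal sketch of a firm-owned application layer with a swappable model underneath. Everything in it is hypothetical: the `ModelBackend` interface, the `StubBackend` stand-in, and the `vendor_a` / `vendor_b` names are illustrations I made up, not a description of how Freshfields Lab or any vendor API actually works.

```python
from dataclasses import dataclass
from typing import Protocol


class ModelBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubBackend:
    """Stand-in for a vendor model; a real one would call the vendor's API."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] draft for: {prompt}"


class ApplicationLayer:
    """Firm-owned layer: routing and logging live here, not with the vendor."""

    def __init__(self, backends: dict[str, ModelBackend], default: str) -> None:
        self.backends = backends
        self.default = default
        self.log: list[tuple[str, str]] = []  # (task, backend name)

    def swap_default(self, name: str) -> None:
        # Changing vendors is a one-line configuration change, not a rebuild.
        if name not in self.backends:
            raise KeyError(f"unknown backend: {name}")
        self.default = name

    def run(self, task: str, prompt: str) -> str:
        backend = self.backends[self.default]
        self.log.append((task, backend.name))
        return backend.complete(prompt)


layer = ApplicationLayer(
    {"vendor_a": StubBackend("vendor_a"), "vendor_b": StubBackend("vendor_b")},
    default="vendor_a",
)
first = layer.run("nda_review", "Summarize the indemnity clause")
layer.swap_default("vendor_b")
second = layer.run("nda_review", "Summarize the indemnity clause")
print(first)
print(second)
print(layer.log)
```

The lawyer-facing call (`layer.run`) never changes when the vendor does. That is the whole point of owning the layer: the workflow and the log stay with the firm, and the model underneath becomes a configuration choice.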

Then April 27 happened.

Goodwin Procter, an Am Law top-30 firm in the United States with approximately 1,800 lawyers, publicly committed to becoming “AI-native” and reportedly targeted 90% AI adoption by year-end. Goodwin has not yet disclosed the kind of architectural detail Freshfields did. We don’t yet know whether they are building the equivalent of Freshfields Lab, whether they are running multiple foundation models, or whether their adoption number is measured by daily active users, weekly use, or simple license counts. Those details matter, and they will determine where Goodwin actually sits on the map I’ll lay out later in this piece.
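Why the measurement question matters is easy to show with toy numbers. The sketch below uses an invented five-person firm and an invented one-week usage log (none of this is Goodwin data) to show how the same deployment can be reported as three very different adoption rates depending on the definition.

```python
from datetime import date

# Hypothetical usage log: user -> days on which they used an AI tool that week.
usage: dict[str, set[date]] = {
    "alice": {date(2026, 4, 20), date(2026, 4, 21), date(2026, 4, 22)},
    "bob": {date(2026, 4, 22)},
    "carol": set(),  # holds a license, never logged in
    "dave": set(),   # holds a license, never logged in
}
HEADCOUNT = 5  # assumption: one person at the firm has no license at all


def license_rate(log: dict[str, set[date]], headcount: int) -> float:
    """Share of headcount that merely holds a license."""
    return len(log) / headcount


def weekly_active_rate(log: dict[str, set[date]], headcount: int) -> float:
    """Share of headcount that used the tool at least once in the week."""
    return sum(1 for days in log.values() if days) / headcount


def daily_active_rate(log: dict[str, set[date]], headcount: int, day: date) -> float:
    """Share of headcount that used the tool on one specific day."""
    return sum(1 for days in log.values() if day in days) / headcount


print(f"license count: {license_rate(usage, HEADCOUNT):.0%}")
print(f"weekly active: {weekly_active_rate(usage, HEADCOUNT):.0%}")
print(f"daily active:  {daily_active_rate(usage, HEADCOUNT, date(2026, 4, 21)):.0%}")
```

In this toy case the same firm can truthfully claim 80%, 40%, or 20% adoption. A 90% target means very different things under each definition.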

But the public commitment matters even before those details are clear. The “should we adopt AI?” conversation is over at the top of the market. The question is now: how fast, how deep, and under whose control.

If you are a managing partner reading this and your firm is still running pilot programs and committee reviews on whether AI should become part of core operations, you are not behind on adoption. You are behind on phase. The firms that won April were not asking if. They were declaring by when.

Storyline 2: The Price of Not Being Ready

While Freshfields and Goodwin were declaring infrastructure on the supply side, the courts were pricing what happens when firms don’t get there in time. Three sanctions stories landed in twenty-three days, and each one moved the public benchmark for what AI failure looks like in legal practice.

On April 4, in Oregon, U.S. Magistrate Judge Mark Clarke sanctioned San Diego attorney Stephen Brigandi $96,000 for AI hallucinations that appeared in a federal filing. With co-counsel sanctions added in, the total reportedly exceeded $110,000. The Brigandi sanction is, as best as anyone tracking this can tell, the most expensive AI hallucination penalty in U.S. legal history. The judge’s order made clear that this was not a one-off mistake by an inexperienced attorney. It was a sustained failure of verification that the court found unacceptable.

Then, on April 21, in the Southern District of New York Bankruptcy Court, Sullivan & Cromwell filed an emergency apology letter to Chief Judge Martin Glenn after AI-generated errors were found in a Chapter 15 motion in the Prince Global Holdings matter. According to reporting from Reuters and Above the Law, the errors included fabricated citations and misstatements of law, and S&C acknowledged that its internal AI use policies and secondary review process had not been followed in the preparation of the filing.

This was not a solo attorney in a small market. This was not an unsophisticated shop running ChatGPT on a personal laptop. This was Sullivan & Cromwell: Am Law top 10, the lead advisor on some of the largest M&A and restructuring transactions of the past decade, and one of the most discreet firms in the entire legal industry. The story broke out of legal media and into mainstream business coverage within days. CNN ran it. Bloomberg ran it. Reuters ran it. AI hallucinations at the very top of the legal market were no longer a niche ethics story. They became a reputational event that any sophisticated client would now be aware of.

Six days later, on April 27, in Cherry Hill, New Jersey, attorney Raja Rajan was reportedly sanctioned $5,000 for AI hallucinations. The dollar amount was modest by comparison with the Brigandi case, but the more important detail is that this was Rajan’s second appearance in front of the same judge for the same kind of failure. The judge warned that any further incidents would be referred to the bar. Sanctions, as a category, are now beginning to escalate not just in absolute amounts but in disciplinary consequence.

Three sanctions stories. Twenty-three days. The dollar amounts and reputational consequences are not random. The curve is not flattening. It is steepening.

Here is what most attorneys I talk to are still getting wrong about this trajectory.

It is not, fundamentally, a tooling failure. S&C had policies. Written policies. Distributed policies. Mandatory training. Internal AI usage guidelines that were part of a responsible adoption framework the firm had been building for over a year. That is what most managers think governance means: a document, a training session, a paragraph in a technology use policy, a sign-off form. By that standard, S&C had governance.

But the issue exposed in April was not whether a policy existed. The issue was whether governance existed inside the workflow.

There is a difference between writing a policy and operationalizing one. That difference is exactly what Freshfields appears to be building toward with Freshfields Lab and the 260 AI Champions program. Policy says: verify AI output before filing. Infrastructure makes verification unavoidable. Policy says: lawyers remain responsible for what they sign. Infrastructure creates the audit trail showing who checked what, when, against which authoritative source, before filing. Policy lives in a PDF that nobody reads after the all-hands meeting. Infrastructure lives in the workflow where the work actually happens.
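Here is one way the policy-versus-infrastructure difference could look in practice. This is a minimal sketch under my own assumptions, not a description of any firm's system: the `Filing` and `Verification` types, the reviewer names, and the case names are all invented for illustration. The idea is simply that a filing cannot be released until every citation has a recorded check.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Verification:
    """One audit-trail entry: who checked what, when, against which source."""
    citation: str
    reviewer: str
    source: str
    checked_at: datetime


@dataclass
class Filing:
    title: str
    citations: list[str]
    audit_trail: list[Verification] = field(default_factory=list)

    def verify(self, citation: str, reviewer: str, source: str) -> None:
        if citation not in self.citations:
            raise ValueError(f"citation not in filing: {citation}")
        self.audit_trail.append(
            Verification(citation, reviewer, source, datetime.now(timezone.utc))
        )

    def unverified(self) -> list[str]:
        checked = {entry.citation for entry in self.audit_trail}
        return [c for c in self.citations if c not in checked]

    def release_for_filing(self) -> str:
        # The gate: verification is enforced by the workflow, not by a memo.
        missing = self.unverified()
        if missing:
            raise PermissionError(f"blocked, unverified citations: {missing}")
        return f"{self.title}: released with {len(self.audit_trail)} verification(s)"


motion = Filing("Example motion", ["Case A v. B", "Case C v. D"])
motion.verify("Case A v. B", reviewer="associate_1", source="official reporter")

blocked_reason = ""
try:
    motion.release_for_filing()
except PermissionError as exc:
    blocked_reason = str(exc)

motion.verify("Case C v. D", reviewer="associate_2", source="official reporter")
result = motion.release_for_filing()
print(blocked_reason)
print(result)
```

The first release attempt fails because one citation has no recorded check. The second succeeds, and the audit trail now shows who verified each citation and when. A PDF policy cannot do either of those things.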

That is the connection between Storyline 1 and Storyline 2. The firms committing to infrastructure are working to turn AI adoption into revenue, margin, and operational leverage. The firms leaving governance on a Word document are discovering that the invoice for that gap eventually comes from the court. And the gap is widening every month, not closing. The same gap is now being priced on the revenue side too — KPMG demanded a 14% AI-driven discount from its own auditor in April, and every General Counsel just got the script.

If your firm has a 12-page AI usage policy and no verification layer in the actual filing workflow, you have the appearance of governance without the machinery of governance. That is the dangerous middle. It looks responsible from the outside. It does not protect you when the test arrives.

April made that distinction visible at every level of the market simultaneously. The Brigandi case exposed it for solo and small-firm practitioners. The S&C case exposed it for elite institutions. The Cherry Hill case exposed it for repeat offenders who didn’t learn from the first warning. Three different segments. One shared structural failure.

Storyline 3: The Alliance Signal

On April 22, Hogan Lovells announced that it had joined the Global Legal Tech Alliance, a network of more than fifteen international law firms created to “shape the future of AI-enabled legal services.” The announcement read exactly the way you would expect this kind of announcement to read. Collaboration. Knowledge-sharing. Standards. Responsible innovation. A law-firm-led platform.

All of that language matters, and the alliance itself is not unworthy of attention. Standards bodies and coordination groups can produce useful frameworks. They can create shared language that makes individual firm decisions easier to communicate to clients and regulators. They can help firms avoid making the same mistakes one at a time. None of that is bad work.

But I read the announcement and immediately did the only thing that mattered, which was to check who was not in the room.

Not in the alliance: Freshfields, which had just declared AI infrastructure status across its operations. A&O Shearman, which is revenue-sharing with Harvey on agentic legal tools — meaning they are not just buying legal AI but co-developing it for resale, an arrangement that fundamentally changes the firm’s economic relationship with the technology. Latham & Watkins, which has thousands of Harvey licenses deployed firmwide. Kirkland & Ellis, the silent giant of the Am Law top tier, whose AI strategy is mostly invisible to the public but whose talent flows with Harvey suggest active engagement on both sides of the deployment fence. Cleary Gottlieb, which just launched ClearyX CX+ as an external product sold beyond its own four walls.

Five firms. Each one in a different stage of building, productizing, or commercially deploying AI infrastructure. None of them in the alliance.

The firms building infrastructure are moving. The firms without infrastructure are coordinating. Both responses are rational. They are not the same response, and they will not produce the same outcomes.

That absence is the more interesting story. It is not a story about whether the alliance is well-intentioned or whether the work it produces will be useful. It almost certainly will be both. The story is structural: when the most exposed and most active firms are not at the table where standards are being shaped, you have to ask what kind of table it is.

My read is that the alliance is a rational hedge, not a build. Coordination is what established players do when the market starts moving faster than any individual institution can comfortably absorb on its own. It is a structural pattern that has historically preceded regulatory capture attempts in adjacent industries. The American Medical Association did this with managed care. State bar associations did this with law firm advertising in the 1970s and again with attorney technology advertising in the 2010s. The accounting profession did this when cloud audit became unavoidable. The pattern is consistent: when established players feel the disruption arriving and cannot individually build their way out, they coordinate to slow the curve and to write rules that protect them from new entrants moving faster than the regulated market expects.

I am not suggesting the Global Legal Tech Alliance has bad intent. I am suggesting that read in context, with the dates of the announcements next to one another, the alliance’s composition tells you what kind of move this is.

If your firm is in the alliance, ask yourself honestly: are you in it because you are building something the alliance helps you scale, or because you are trying to slow the pace at which the market reorganizes around firms that have already built? If it is the second, the alliance is theater. The actual work — which is moving governance from policy document to workflow infrastructure — still has to happen inside your own walls, regardless of how many cross-firm working groups your name appears on.

A standards body cannot own your application layer. An alliance cannot create your verification workflow. A committee cannot answer your client when they ask, in writing, how AI is being used on their matter. Coordination is useful. Infrastructure is decisive. April’s third signal was the reminder of which is which.

Storyline 4: Agentic Shipped

While the first three storylines played out across the policy, infrastructure, and coordination layers of the industry, the technology underneath was quietly shipping the capability that would make all the prior conversations either obsolete or urgent depending on which side of the line your firm was on.

On April 24, Harvey published its GPT-5.5 evaluation results. On Harvey’s BigLaw Bench, which is the internal benchmark Harvey uses to measure model performance against legal tasks, GPT-5.5 scored 91.7%, up from 91.0% on GPT-5.4. The gap sounds small. It is not small if you understand what is being measured.

At the bottom and middle of the legal AI accuracy curve, the question being benchmarked is “can the model produce coherent legal language?” That question was answered in the affirmative roughly two years ago. At the top of the curve, where Harvey, CoCounsel, Legora, and a handful of others are competing, the question is fundamentally different. It is not whether the model can produce language. It is whether the model can operate reliably inside legal process, across complex, multi-step workflows that include risk assessment, litigation analysis, deal management, document review, and increasingly, autonomous execution. Each percentage point at the top of the curve corresponds to a reduction in human review burden and an expansion of the work AI can do without supervision. A 91.7% score, if it holds across real-world legal tasks rather than benchmark tasks, represents a meaningful expansion of the work that can be delegated to the system.
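One way to see why a 0.7-point gain is not trivial is to look at the remaining gap rather than the score. The arithmetic below rests on a simplifying assumption of mine: that the benchmark score can be read as a pass rate, so that 100 minus the score is the share of tasks still needing human correction. Harvey's actual scoring method may not map onto that so neatly.

```python
def shortfall_per_1000(score: float) -> int:
    """Tasks per 1,000 that fall short, reading the score as a pass rate."""
    return round((100.0 - score) * 10)


def relative_error_reduction(old_score: float, new_score: float) -> float:
    """Fraction of the old shortfall eliminated by the new score."""
    old_gap = 100.0 - old_score
    new_gap = 100.0 - new_score
    return (old_gap - new_gap) / old_gap


old, new = 91.0, 91.7
print(shortfall_per_1000(old))                        # 90
print(shortfall_per_1000(new))                        # 83
print(f"{relative_error_reduction(old, new):.1%}")    # 7.8%
```

Under that reading, the move from 91.0% to 91.7% removes about 7.8% of the remaining shortfall: roughly seven fewer problem outputs per thousand tasks.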

Harvey also continued moving toward a multi-model stack. The same eval announcement noted that Harvey now offers Google’s and Anthropic’s models alongside OpenAI’s foundation models inside its enterprise product. That positions Harvey not just as a model wrapper but as an orchestration layer — a deliberate echo of what Freshfields is doing internally, applied at the vendor level rather than the firm level.

Three days later, on April 27, Clio pushed Vincent further into agentic territory. The phrase Clio’s marketing used was “end-to-end legal execution.” Not assistance. Execution.

That word matters more than most readers initially gave it credit for.

Until April, almost every major legal AI tool was framed around assistance. Harvey assists. CoCounsel assists. Lexis+ AI assists. Spellbook assists. The lawyer remains the operator. The AI is leverage. The supervision question is operational: did you check the work before submitting it? That framing fits comfortably inside existing professional responsibility frameworks because it preserves the lawyer as the actor and the technology as the tool.

Agentic execution changes the structure. The lawyer is no longer the operator. The lawyer becomes the supervisor of a system that can plan, sequence, retrieve, draft, analyze, and execute multi-step legal work. That is not just a product shift. It is a governance shift, an economic shift, and a professional responsibility shift. The supervision question changes from “did you check the work?” to “is this work even yours under the relevant professional rules?” — and that is a substantively different question that the existing rules of professional conduct were not written to answer.

This is where the four storylines start collapsing into one another in a way that becomes hard to ignore. Freshfields declared infrastructure. The courts priced the gap between policy and infrastructure. The non-infrastructure middle of the market started coordinating through alliances. And while all of that was happening, Clio and Harvey were quietly shipping the technology that makes infrastructure mandatory rather than optional. If agentic execution becomes the norm rather than the exception, then “we have an AI policy” stops being a serious defense for any firm that is operating an agent. The old policy assumed a human operator using AI to assist with their own work. The new model assumes a human supervisor overseeing AI execution of work the supervisor did not personally perform. Those are not the same risk model. They do not require the same controls. They do not create the same audit trail. They do not carry the same client disclosure burden, and they almost certainly do not create the same malpractice exposure.

The first serious unauthorized practice of law fight against a firm operating an agentic legal system no longer feels theoretical to me. My read is that we are closer to three months out than to twelve. I would not be surprised if it happens sooner.

The Pattern Nobody Is Drawing

Phase changes in markets do not announce themselves cleanly. The water does not tell you when it is boiling. The bubbles do.

Four bubbles surfaced in April. Read them stitched together, and you see something more useful than four discrete events. You see four stages of the same disruption playing out across the market in parallel.

Stage 1: Asking which tool

This is where most of the legal market still sits. Firms in Stage 1 are comparing Harvey to Spellbook, debating Copilot pricing, asking whether Claude is better than Gemini for legal work, evaluating whether to standardize associates on a single platform or run multiple in parallel. The Stage 1 conversation lives entirely in the procurement layer. It is useful, it is necessary, and it is shallow. The firms in Stage 1 are not yet engaging with the structural questions about who owns the layer where work happens.

Stage 2: Paying for not knowing

The sanctions stories from April live here. Firms in Stage 2 have moved past Stage 1 (they are using AI in some operational capacity), but they have not built the infrastructure to use it safely. The bill for the gap is now coming due, and the size of the bill is increasing month over month. Brigandi was sanctioned $96,000 in April. The Cherry Hill repeat offender is a bar referral away from worse. S&C paid in reputation rather than in dollars, but the reputational invoice may turn out to be the more expensive one over time.

Stage 3: Hedging through coordination

This is where alliances start looking attractive to managing partners. The disruption is undeniable. The internal infrastructure is not yet built. The capital and conviction needed to build it independently are not available, or the firm leadership is unwilling to make the bets. So the firm coordinates with peers. It joins working groups. It signs alliance charters. It contributes to standards conversations. Sometimes that work helps. Sometimes it slows the inevitable. Usually it does both. The honest read is that Stage 3 is a useful intermediate state, but it is not a destination.

Stage 4: Building infrastructure

This is where Freshfields is operating now. This is where ClearyX is becoming interesting as an external product. This is where Reed Smith’s Gravity Stack matters, having spent years quietly building the capability that is now being marketed externally. This is where Macfarlanes’ Amplify product, built on Harvey infrastructure but sold to Macfarlanes’ own clients, is sitting. This is where the AI-native firms — Crosby, Norm Law, General Legal, the wave of YC-backed and venture-backed legal startups — were born. At Stage 4, the firm has stopped asking which chatbot to buy. It is asking which layer of the stack it owns.

The honest question for any managing partner is not “do we use AI?” The honest question is “what stage are we actually in?” And then, more uncomfortably: what stage are we saying we are in publicly versus what stage are we operating in privately? The gap between those two answers is where the risk lives. A firm that publicly claims Stage 3 readiness while privately operating at Stage 1 is a firm that will not survive its first significant client AI disclosure request without an emergency intervention. If you want a more granular self-diagnostic across governance, infrastructure, workflow, and compliance dimensions, the AI Vortex 5-Level Adoption framework breaks down where firms typically stand on each.

What the Demand Side Says

I want to ground this analysis in something I see that most people writing about legal AI do not. I run a property called AI Vortex. It is cited inside every major AI engine when lawyers ask AI assistants about legal AI — ChatGPT, Claude, Gemini, Microsoft Copilot, and inside Microsoft Word’s integrated assistant. Across those channels, I see what lawyers are actually searching for, every day, in mechanically regular patterns.

What I see, consistently, every month, is that the top queries are tool comparisons. “Harvey pricing.” “Spellbook vs Harvey.” “Claude for legal.” “Copilot for lawyers.” “Gemini for law firms.” “CoCounsel review.” “Best AI for contract review.” Variations of these queries make up the dominant share of legal AI search behavior. The pattern is stable. It does not shift much month to month.

What I do not see, almost ever, in the top queries: questions about architecture. Questions about governance. Questions about how to deploy AI safely inside a midsize firm. Questions about who owns the application layer. Questions about how to write an AI disclosure response for a sophisticated client. Those queries exist, but they are far down the long tail. They are not where the volume is. The visibility side of this question — how clients find legal services when the first place they look is an AI assistant — is its own discipline, and one most firms are not yet measuring.

That tells me something important. The demand side of the legal AI market — the actual lawyers and legal operations professionals trying to buy and deploy AI — is one phase behind the supply side. Supply has moved to Stage 4 thinking, in pockets. Demand is still mostly at Stage 1. Lawyers are asking which vendor to buy. Freshfields already answered which architecture to own.

That gap is the opportunity for firms that move now. It is also the threat for firms that don’t. Because when demand catches up — and it will, because the courts are forcing it to catch up through sanctions, because clients are starting to ask questions through procurement, because GCs are reading the same news cycles their outside counsel are reading — the question coming from clients will not be “which AI tool do you use.” The question will be: how is AI governed inside my matter? Who reviews AI output before it reaches me? Can you show the verification trail? Which systems have touched my data? Can your firm explain its AI architecture to my board, in writing, this week?

When your top client starts asking those questions and your firm is still in Stage 1 procurement mode, you do not lose the client at the next renewal. You lose the client when the next significant matter goes to a Stage 4 firm you didn’t even know was being evaluated. That is already the direction of travel for some of the AI-native firms competing with traditional outside counsel for venture-backed clients. April just made the pattern visible at the BigLaw level.

The 14-Firm Map

To make the framework concrete, here is where named BigLaw firms appear to sit on the four-stage map as of April 30, 2026, based on public information and announcements. The map is necessarily imperfect because not every firm discloses architecture publicly, and several firms are intentionally opaque about their internal AI strategy. But the pattern is clear enough to be useful.

Stage 4 — Multi-LLM with owned application layer

Freshfields is the cleanest example. Google Cloud sits underneath, Anthropic Claude sits underneath, Freshfields Lab sits above as the firm-owned application layer, and 260 internal AI Champions distribute governance across 33 offices. The foundation model is not the strategy. The application layer is.

Stage 4-adjacent — Productized AI, internal infrastructure, or external offerings

Cleary Gottlieb, through its ClearyX subsidiary, just launched CX+ as a contract AI product sold externally. Reed Smith, through its Gravity Stack subsidiary established in 2018 and rebranded around AI in 2024, sells AI services to other Am Law 200 firms and corporate legal departments. Macfarlanes, with 80% daily Harvey usage internally and the Amplify product that productizes AI capabilities for client subscription, has crossed from internal user to external seller. A&O Shearman, with a revenue-sharing arrangement with Harvey on agentic tools, has effectively partnered up the stack. Goodwin Procter, with its public April 27 commitment to 90% adoption by year-end, is in this category in commitment terms even if the architecture is still unclear.

These firms are not all doing the same thing. But they share one feature: AI is no longer just an internal productivity tool at any of them. It is becoming part of the firm’s product, delivery model, client strategy, or operating infrastructure.

Stage 3 — Major vendor commitments without owned application layer

CMS, with a firmwide Harvey rollout to over 7,000 lawyers across 50 countries, sits here. DLA Piper, with Harvey stacked on top of Microsoft Copilot in a dual-stack arrangement covering thousands of lawyers, sits here. Latham & Watkins, with its 3,600-plus Harvey licenses deployed firmwide in 2025, sits here. Clifford Chance, with its Microsoft and Azure OpenAI commitment going back to 2024, sits here. PwC, with 230,000 users on Microsoft Copilot at enterprise scale (although the deployment is not legal-only), sits here as relevant context for how the Big Four are running adjacent strategies.

This is serious adoption. In some cases, it is more advanced than what most law firms will achieve in the next two years. But it is still mostly vendor-led. That may be enough for many use cases, and it may even be the right intermediate step for firms not yet ready to build their own application layer. But it is not the same as owning the application layer, and the strategic implications differ.

Stage 2 — Silent giants and exposed institutions

Kirkland & Ellis is the interesting silent giant. The firm has not announced a major public AI platform deal in the same register as Latham, CMS, or A&O Shearman. But the talent flows between Harvey and Kirkland show the battleground is active: Harvey has poached Kirkland staff in past hiring cycles, and Kirkland has hired from Harvey at the partner level. That bidirectional movement suggests engagement on both sides of the deployment fence, even if the strategic posture is not yet public. Sullivan & Cromwell is now the cautionary elite example, not because it lacks sophistication but because its very sophistication makes the April 21 incident more significant than it would be at a less prestigious institution.

Stage 1 to 2 — The quiet majority

The rest of the Am Law top 50, the bulk of midsize and regional firms, the firms with committees and pilots and policies but no public architecture and no visible verification layer in their workflows. This is where most of the market still sits.

Now reverse the map. If you are a managing partner at a midsize firm, which is the segment I write for, your competition is not only the Am Law 100. Your competition is the AI-native firm that will pitch your client a faster, flatter, clearer workflow at flat-fee pricing. Your competition is the Stage 4 BigLaw firm that will start including AI architecture diagrams in pitch decks for the deals that used to default to firms in your tier. Your competition is the vendor-enabled boutique that can deliver the first 40% of any matter at software speed and lawyer-reviewed quality.

If you cannot put your firm on this map honestly, that itself is the signal. The work for the next thirty days is the honest stage diagnostic, not a vendor evaluation.

What This Means for Your Firm

Three questions. If you cannot answer them in writing within the next thirty days, you do not have an AI strategy. You have a procurement contract.

1. Who owns your application layer — your firm, or your vendor?

If the answer is “we use Harvey” or “we use Copilot,” the answer is your vendor. That is not automatically wrong. Vendor-based strategies can be operationally effective in the short and medium term. But it is a hedge, not a moat. The firm that owns the application layer can swap the foundation model. The firm that holds only a vendor contract is the one that can be swapped out, or repriced, at renewal. Both states are stable as long as the underlying market is stable. The problem is that the underlying market is not stable, and 2026 is going to make that obvious.

2. If your LLM provider triples prices at renewal, what is your move?

If the answer is “we would need to evaluate alternatives at that point,” the honest answer is that you have no move. You have a forward-looking option to make a decision under pressure when the contract is up. Freshfields’ answer to this question, structurally, is “we already operate across multiple providers, and our internal champions and application layer make switching foundation models a workflow exercise rather than a strategic crisis.” That is optionality. Optionality is strategy. Forward-looking evaluations under pressure are not.
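To make the optionality point concrete, here is a minimal sketch of what "owning the application layer" can look like in code. It is an illustration under stated assumptions, not a description of how Freshfields or any other firm actually builds this. Every name in it (`FoundationModel`, `StubModel`, `ApplicationLayer`, `summarize_contract`, the provider labels, the matter IDs) is hypothetical, and the stub models only echo text rather than calling any real vendor API.

```python
from dataclasses import dataclass
from typing import Protocol


class FoundationModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubModel:
    """Stand-in for a real provider client. It only echoes; it calls no API."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] draft for: {prompt}"


class ApplicationLayer:
    """Firm-owned layer: prompts, workflows, and the audit log live here."""

    def __init__(self, model: FoundationModel) -> None:
        self.model = model
        self.audit_log: list[dict[str, str]] = []

    def swap_model(self, model: FoundationModel) -> None:
        # Switching providers changes one attribute, not the workflows.
        self.model = model

    def summarize_contract(self, matter_id: str, text: str) -> str:
        prompt = f"Summarize the key obligations in: {text}"
        draft = self.model.complete(prompt)
        # The firm, not the vendor, records which model touched which matter.
        self.audit_log.append({"matter": matter_id, "model": self.model.name})
        return draft


layer = ApplicationLayer(StubModel("provider-a"))
first = layer.summarize_contract("M-001", "sample lease")

# Renewal goes badly: point the same workflow at a different provider.
layer.swap_model(StubModel("provider-b"))
second = layer.summarize_contract("M-002", "sample lease")

print(first)
print(second)
print(layer.audit_log)
```

The design choice worth noticing is that the workflow method and the audit log never mention a specific vendor. In a real deployment the hard parts are evaluation, security review, and retraining lawyers, none of which this sketch covers, but the structural idea is the same: the model is a replaceable dependency of something the firm owns.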

3. If your top client demands AI disclosure tomorrow, can you produce the answer in writing today?

Not a vague answer. Not “we have policies.” A real answer. Which specific tools are deployed. Which workflows touch them. Which data boundaries apply. Which verification steps run automatically. Which human review checkpoints are required. Which audit trail is captured for which matters. Which exceptions exist and why. Which specific matters have used AI in the last twelve months and which have not.

This is not hypothetical. The Colorado AI Act is already in effect for high-risk automated decision-making in legal services. The EU AI Act high-risk provisions hit on August 2, 2026. State bar disclosure rules are expanding. Sophisticated corporate clients with their own AI governance programs — and there are now hundreds of them — are starting to push AI disclosure questions through procurement as part of standard outside counsel onboarding. By Q3 2026, the inability to answer this question in writing will not be a curiosity. It will be a procurement disqualifier.
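One way to test whether the written answer to question 3 exists is to ask whether it could be stored as structured data rather than as a policy memo. The sketch below is a hypothetical register, not a regulatory template or any client's actual questionnaire. The class names, field names, tool names, and matter IDs are all assumptions chosen to mirror the list of items above.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ToolDisclosure:
    """One deployed tool and the controls around it (hypothetical schema)."""

    tool: str
    workflows: list[str]
    data_boundaries: list[str]
    automated_verification: list[str]
    human_review_checkpoints: list[str]
    exceptions: list[str] = field(default_factory=list)


@dataclass
class MatterUsage:
    """Audit-trail entry: which tool touched which matter, and when."""

    matter_id: str
    tool: str
    used_on: date


@dataclass
class DisclosureRegister:
    tools: list[ToolDisclosure]
    usage: list[MatterUsage]

    def gaps(self) -> list[str]:
        """Tools with no automated verification or no human review step."""
        return [
            t.tool
            for t in self.tools
            if not t.automated_verification or not t.human_review_checkpoints
        ]

    def matters_using_ai(self, as_of: date, days: int = 365) -> list[str]:
        """Matter IDs with recorded AI use in the trailing window."""
        cutoff = as_of - timedelta(days=days)
        return sorted(
            {u.matter_id for u in self.usage if cutoff <= u.used_on <= as_of}
        )


# Illustrative placeholder entries only; none of this is real firm data.
register = DisclosureRegister(
    tools=[
        ToolDisclosure(
            tool="drafting-assistant",
            workflows=["first-draft NDAs"],
            data_boundaries=["no client data leaves the firm tenant"],
            automated_verification=["citation check"],
            human_review_checkpoints=["associate sign-off"],
        ),
        ToolDisclosure(
            tool="research-assistant",
            workflows=["case law search"],
            data_boundaries=["public sources only"],
            automated_verification=[],  # missing control, flagged by gaps()
            human_review_checkpoints=["partner review"],
        ),
    ],
    usage=[
        MatterUsage("M-001", "drafting-assistant", date(2026, 3, 1)),
        MatterUsage("M-002", "research-assistant", date(2024, 12, 15)),
    ],
)

print(register.gaps())
print(register.matters_using_ai(as_of=date(2026, 4, 30)))
```

If a firm cannot populate something like this without a month of interviews, that is the same finding as not being able to answer the question in writing.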

If the honest answers to the three questions are “our vendor,” “we have no move,” and “no,” your firm is in Stage 1 or Stage 2 regardless of what your AI committee minutes say. That is not a moral judgment. It is a positional judgment, and the position is unstable.

The firms that survive April’s phase change are not going to be the firms with the best AI policy on paper. They are going to be the firms that did the unglamorous work of moving governance from a policy document to workflow infrastructure. That work is not photogenic. It does not generate press releases. It is hard to pitch to a partnership that wants visible wins. That is exactly why most firms will delay it. It is also why the firms that do it will compound their advantage every quarter for the next five years.

Closing

April 2026 was not the month AI changed legal. It was the month legal stopped being able to pretend it had not.

The firms that committed to infrastructure said it out loud. The firms that did not are paying for it in court. The middle of the market is forming alliances to slow the math down. The agents are shipping in production. The demand side is catching up to where the supply side already moved.

Five years from now, when someone asks when this shift moved from inevitable to here, the honest answer may well be the thirty days I just walked you through. April 2026.

I will keep tracking this monthly.

What I am watching for next: the first serious unauthorized practice of law claim filed against an agentic legal system, or against the firm operating one. The first malpractice carrier to publicly reprice AI risk in its underwriting, with explicit reference to the gap between policy and infrastructure. The first Am Law 50 firm to publicly name its application layer the way Freshfields named Freshfields Lab — because the moment naming becomes a competitive move, the market enters its next phase. The first midsize firm to lose a flagship corporate client to an AI-native competitor in a head-to-head pitch that becomes public.

Each of those will be a phase marker for the next memo. I’ll be writing about them when they happen.

In the meantime, the work for any firm reading this is an honest self-assessment. Map your stage. Read your own AI policy out loud. Ask whether what you wrote is what you would actually defend in court next quarter, or in a procurement audit, or in front of a sophisticated client’s general counsel.

That is the diagnostic. Everything else, at this point, is theater.

Next Issue: The AI Sanctions Tracker

I have been quietly building a global database of every court order, sanction, and disciplinary action involving AI hallucinations or misuse in legal practice. More than 1,200 cases tracked across federal and state jurisdictions. The dataset is growing at five to six new cases per day. It is mapped by jurisdiction, sanction type, dollar amount, attorney, firm, and case disposition.

The Brigandi $96,000 sanction is one row. The S&C apology letter is another. The Cherry Hill repeat is another. There are more than 1,197 others.

Next issue, I am opening it. Not as commentary. As data.

If you are a managing partner, general counsel, malpractice carrier, legal operations lead, or AI governance committee member, this is the dataset you should already be looking at when you reprice your risk model. Subscribe below to receive next month’s memo with first-wave access details.